Getting To Production

If you launched an AI initiative in the past year, the odds of success weren’t in your favor. While there are no definitive timelines for the phases in Gartner’s Hype Cycle for Emerging Technologies, it’s possible that you got caught up in the ‘Peak of Inflated Expectations’ and slid into the ‘Trough of Disillusionment’. But take heart: the ‘Slope of Enlightenment’ is the next stop on the journey. That’s when the benefits should start to show themselves, but there’s work to be done before you get there.

It may be small consolation, but you aren’t alone. MIT’s 2025 research on the state of AI in business found that 95% of generative AI pilots fail to deliver measurable financial returns. S&P Global reported that 42% of companies scrapped most of their AI initiatives in 2025. Those are just two of several studies saying essentially the same thing: moving AI to reliable, production-grade status isn’t easy.

Why AI Initiatives Fail

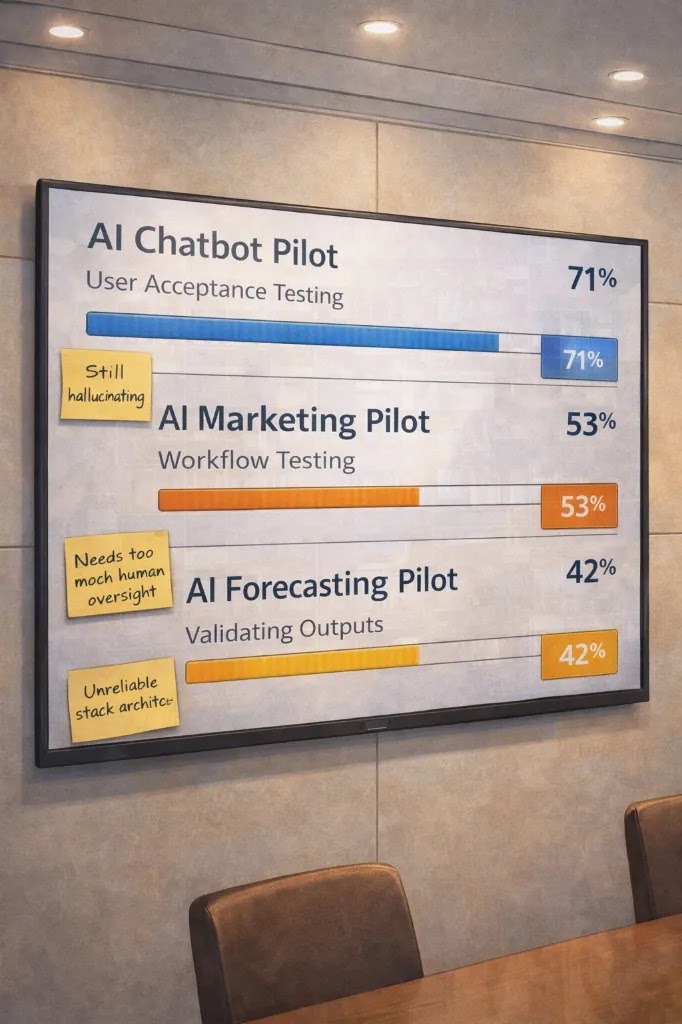

‘Pilot purgatory’ refers to the state where AI pilots or proofs-of-concept (POCs) never reach full-scale production. The research points to three common reasons for failure.

Failure Mode 1: Poor Change Management

How often have you heard somebody in your company say, “But this is the way we’ve always done it”? If that doesn’t make you cringe, it should. The most common mistake you can make when trying to implement AI is taking an existing, inefficient, poorly documented workflow and simply injecting AI into it. Your first step should be a full breakdown of the processes and people involved. AI is a force multiplier. If your underlying process is fundamentally flawed, AI might just break it completely.

The fix is straightforward but requires discipline: before you automate anything, map the current process end-to-end and identify the bottlenecks, dependencies and inefficiencies. Common culprits include poor data quality, lack of oversight, redundant or unnecessary steps, lack of accountability, and workarounds that compensate for systemic deficiencies. Then redesign the workflow with AI as a core component. This is the difference between automation and transformation.

Failure Mode 2: Lack of a Clear ROI Strategy

Too many AI projects start with the question “What can AI do?” instead of “What business problem are we solving?” This is how you end up with impressive demos that have no path to value. APQC’s 2025 analysis confirmed this, identifying the top reasons for AI project failure as inflated expectations and the absence of measurable key performance indicators (KPIs).

In an interview during the Cisco AI Summit in February 2026, Jensen Huang, CEO of NVIDIA, suggested that, when it comes to AI, organizations should “let a thousand flowers bloom” and worry about return on investment (ROI) later. With all due respect to one of the most visionary leaders in technology, this advice is a luxury that most businesses cannot afford. Huang runs a company valued in the trillions. His R&D budget is essentially unlimited. If you’re an owner trying to make payroll every two weeks, you need every dollar of AI investment connected to a measurable outcome. The garden metaphor may work for NVIDIA. It doesn’t work for a 50-person services company.

In my earlier writing on pilot design, I emphasized picking a single SMART metric (specific, measurable, achievable, relevant and time-bound) and using it as the success criterion for a 90-day pilot. Recent research supports this approach. Connecting AI initiatives to clear business outcomes provides verifiable indicators of success or failure. Without those indicators, leadership loses interest and projects die.

Failure Mode 3: Trying To Do Too Much Too Soon

The AI landscape is evolving at a pace that is genuinely unprecedented. Models that were cutting-edge six months ago have already been surpassed. Tools that required custom development are being embedded into existing platforms. The window to capitalize on any specific AI capability is narrower than most businesses realize.

This has a direct implication for how you run pilots. A 90-day trial is already generous. Speed and iteration matter. Taking a minimum viable product (MVP) approach is mandatory. The objective is not perfection on the first attempt. It’s rapid learning that compounds over time.

McKinsey’s 2025 State of AI survey reinforced this, finding that organizations achieving AI success take a fundamentally different approach: measured build-up, then rapid scale. The emphasis is on velocity of learning, not velocity of deployment.

The 70/30 Rule: Why People and Process Outweigh Technology

There’s a finding in Boston Consulting Group’s research that deserves specific attention. They call it the “10-20-70 principle”: AI success is 10% algorithms, 20% data and technology, and 70% people, processes and cultural transformation.

That’s exactly what we’ve been saying at SeventyThirty.AI. Combine BCG’s 10% for algorithms with its 20% for data and technology and you get the same split: getting AI right is 70% people and process, 30% technology. When it works, something powerful happens: the ratio reverses. AI handles 70% of the routine work and your people keep doing the 30% that matters most: creativity, discernment, and genuine human connection. That’s the Human Premium, and it’s what makes it all work.

Different analysts use slightly different numbers, but the weight distribution is consistent across every credible source I’ve reviewed. If you’ve read my posts on guardrails, governance and culture, you already have a framework for addressing this. The point here is that independent research now confirms, at scale, what AI practitioners have been observing on the ground.

A Practical Path To Success

If you’re stuck, here’s what to do.

First, audit your current AI initiatives honestly. Are they connected to specific business outcomes with measurable KPIs? If not, stop and define them.

Second, look at the process you’re trying to improve. Have you actually redesigned it, or have you just attached AI to the way things already work? If it’s the latter, step back and fix the process first.

Third, assign operational ownership. The person responsible for the AI initiative cannot be someone in IT or data science alone. It must be someone with P&L accountability or direct operational authority who will be measured on the outcome.

Fourth, set a hard deadline. Ninety days to run, evaluate and decide: scale it, pivot it or kill it. No extensions. The discipline of a fixed timeline forces the clarity that open-ended experimentation never will.

Finally, document everything. The outcomes, the failures, the surprises. This becomes your AI playbook — the institutional knowledge that makes your next initiative faster, cheaper and more likely to succeed.
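
If it helps to make the five checks concrete, they could be captured in a few lines of Python. This is only a minimal sketch under my own assumptions, not a prescribed tool: the `Pilot` fields, the `audit` function and the sample values are all hypothetical names invented for illustration, and the 90-day window is the only number taken from the steps above.

```python
from dataclasses import dataclass
from datetime import date, timedelta

PILOT_LENGTH_DAYS = 90  # the fixed pilot window from step four


@dataclass
class Pilot:
    # All field names are hypothetical, chosen for illustration only.
    name: str
    kpi: str                  # the measurable KPI; empty string if undefined
    process_redesigned: bool  # True if the workflow was redesigned, not just automated
    owner_has_pnl: bool       # True if the owner has P&L or operational authority
    start: date               # first day of the pilot
    playbook_notes: str       # outcomes, failures and surprises recorded so far


def audit(pilot: Pilot, today: date) -> list[str]:
    """Return the gaps found against the five checks; an empty list means none."""
    gaps = []
    if not pilot.kpi.strip():
        gaps.append("define a measurable KPI")
    if not pilot.process_redesigned:
        gaps.append("redesign the process before automating it")
    if not pilot.owner_has_pnl:
        gaps.append("assign an owner with P&L or operational authority")
    if today > pilot.start + timedelta(days=PILOT_LENGTH_DAYS):
        gaps.append("deadline passed: scale it, pivot it or kill it")
    if not pilot.playbook_notes.strip():
        gaps.append("document outcomes, failures and surprises")
    return gaps


# Made-up example: a pilot with no KPI, no accountable owner and no notes.
example = Pilot(
    name="invoice triage",
    kpi="",
    process_redesigned=True,
    owner_has_pnl=False,
    start=date(2026, 1, 5),
    playbook_notes="",
)
example_gaps = audit(example, today=date(2026, 3, 1))
for gap in example_gaps:
    print("-", gap)
```

The script itself matters less than the habit it represents: every pilot gets the same five questions, asked on a schedule, with the answers written down.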

The Bottom Line

Pilot purgatory is not a technology problem. It’s an execution problem. The models work. The tools are available. The economics are favorable. What’s missing, for most businesses, is the discipline to connect AI to real outcomes, the willingness to redesign rather than just automate, and the organizational structure to move at the speed the technology demands.

The good news is that these are solvable problems. They don’t require massive budgets or deep technical expertise. They require clarity, ownership and discipline. It’s your choice.

Sources

This article builds on my previous series on AI adoption for businesses. For the foundational framework — including pilot design, guardrails, governance and culture — visit us at SeventyThirty.AI.

© 2026 by Roy Gowler. All rights reserved.